Strategic Evolution of AI Analytics using AI-ready Data Platforms

Abstract

Life sciences organizations are rapidly moving from experimental AI pilots to production-scale, agent-driven research workflows. As Model Context Protocol (MCP) based architectures gain traction for orchestrating queries across compound and target databases such as ChEMBL, BindingDB, and PubChem, performance constraints that were once tolerable in proof-of-concept environments are emerging as material bottlenecks at scale.

Scientific discovery is inherently iterative and exploratory. Researchers routinely ask semantically similar questions across related targets, compounds, and chemical classes. Yet most AI-enabled research platforms continue to execute each query as a discrete operation, repeatedly invoking the same external tools and APIs. The result is escalating latency, rising infrastructure costs, and a widening gap between AI system capabilities and the expectations of real-time scientific decision making.

This challenge is becoming acute as organizations deploy multi-agent systems, expand federated data access, and integrate AI more deeply into early discovery and lead optimization workflows. Without architectural mechanisms to recognize and reuse prior computational work, agentic platforms risk becoming slower, more expensive, and harder to operationalize precisely at the moment when speed to insight is becoming a competitive differentiator.

We address these challenges through a prototype, a semantic caching framework implemented as a Model Context Protocol server that realizes Cache Augmented Generation for life sciences discovery workflows. By intelligently recognizing semantic equivalence across aggregated queries that span ChEMBL, BindingDB, and PubChem, the system avoids redundant re-execution of federated tool calls. This work demonstrates that Cache Augmented Generation architectures can operationalize agentic AI systems at the scale and speed required for competitive drug discovery.

1. System Overview and Performance Characteristics

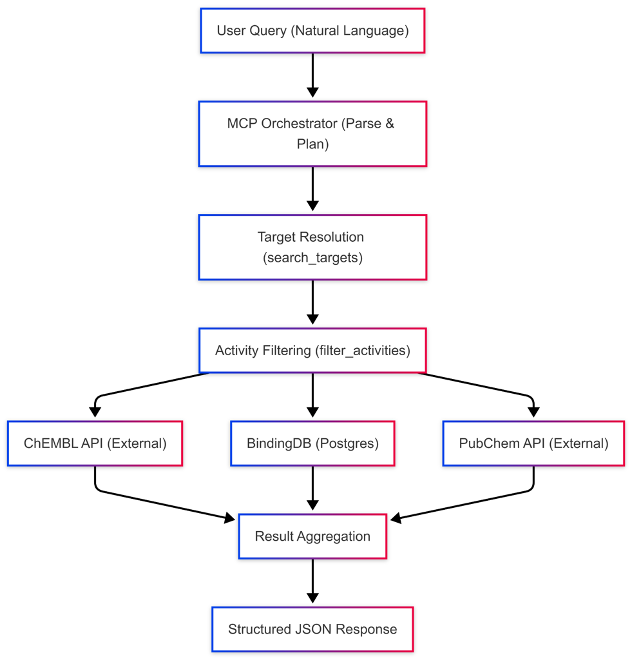

We implemented a Model Context Protocol (MCP) server to federate compound and bioactivity queries across three data sources: ChEMBL, accessed via external APIs; BindingDB, stored locally in a PostgreSQL database; and PubChem, accessed via external APIs. Vector similarity search is performed in application code rather than via pgvector indexing. Collectively, these sources comprise over 3 million compounds with associated bioactivity, structural, and pharmacological annotations.

User queries are expressed in natural language and typically specify a biological target and a potency constraint. For example: “Find compounds targeting PRMT5 with IC50 < 10 nM.” The MCP orchestrator decomposes each query into a sequence of tool calls. First, a target resolution step maps the query term (e.g., PRMT5) to canonical identifiers. Next, activity filters are applied to retrieve compounds meeting the specified potency threshold. Results from ChEMBL, BindingDB, and PubChem are then aggregated and normalized into a structured JSON response.
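The decomposition described above can be sketched as follows. This is a minimal illustration, not the orchestrator itself: in the actual system the LLM-driven MCP orchestrator performs this step, whereas the sketch substitutes simple regular expressions. The function name `plan_tool_calls`, the unit table, and the argument names are assumptions made for illustration; the tool names `search_targets` and `filter_activities` are borrowed from the cache schema examples later in this document.

```python
import re
from typing import Any, Dict, List

# Multipliers to nanomolar (illustrative subset of units; an assumption).
_TO_NM = {"pm": 1e-3, "picomolar": 1e-3, "nm": 1.0, "nanomolar": 1.0,
          "um": 1e3, "micromolar": 1e3}
# All-caps tokens that name an assay measure rather than a target.
_ASSAY_TERMS = {"IC50", "EC50"}

_THRESHOLD = re.compile(
    r"(?:<|below|under|less than)\s*(\d+(?:\.\d+)?)\s*"
    r"(picomolar|nanomolar|micromolar|pm|nm|um)\b",
    re.IGNORECASE,
)
# Naive target detection: an all-caps alphanumeric token such as PRMT5.
_SYMBOL = re.compile(r"\b[A-Z][A-Z0-9]{2,}\b")


def plan_tool_calls(query: str) -> List[Dict[str, Any]]:
    """Turn a natural-language query into an ordered list of tool calls."""
    symbols = [s for s in _SYMBOL.findall(query) if s not in _ASSAY_TERMS]
    if not symbols:
        raise ValueError("no target symbol found in query")
    # Step 1: target resolution.
    plan = [{"tool": "search_targets", "args": {"target": symbols[0]}}]

    # Step 2: optional potency filter, normalized to nanomolar.
    match = _THRESHOLD.search(query)
    if match:
        value, unit = float(match.group(1)), match.group(2).lower()
        plan.append({"tool": "filter_activities",
                     "args": {"ic50_max": value * _TO_NM[unit]}})
    return plan


plan_a = plan_tool_calls("Find compounds targeting PRMT5 with IC50 < 10 nM.")
plan_b = plan_tool_calls("Show PRMT5 compounds below 10 nanomolar")
```

Both phrasings yield the same two-step plan, which is the property the caching layer later exploits: differently worded questions can resolve to identical tool calls.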

Each query requires multiple downstream operations, including external API calls and local vector-based retrieval. In aggregate, end-to-end response times typically exceed 10 seconds. While this latency is acceptable for isolated queries, it becomes a limiting factor in exploratory research workflows. Researchers frequently issue semantically similar queries that differ only in thresholds, target variants, or contextual framing, yet each request triggers a full re-execution of the same resolution and retrieval steps.

This design leads to repeated computation and redundant data access for closely related queries, constraining interactive use and motivating investigation into mechanisms for reusing prior query results.

2. The Hypothesis

We hypothesize that caching query-response pairs at the LLM interaction layer can meaningfully reduce redundant computation and latency in MCP-based federated query systems. In the current implementation, the system persists the LLM-formatted response alongside the tool name, arguments, and query embedding, enabling semantic reuse when sufficiently similar queries are detected. This approach provides measurable latency reduction for paraphrased and repeated queries. A further evolution of this hypothesis, caching at the granularity of individual intermediate tool executions such as target resolution and compound retrieval, is identified as a direction for future work. That model would enable partial reuse across structurally different queries that share underlying data requirements, but it is not implemented in the present system. The approach follows the principles of Cache Augmented Generation (CAG), but its effectiveness is expected to vary with query similarity, data freshness, and reuse frequency, motivating systematic evaluation of cache performance across diverse query patterns.

Semantic Caching: Mechanisms and Limitations

Semantic caching can be applied by comparing new user queries against previously executed queries using embedding similarity and reusing cached final responses when similarity exceeds a threshold. For example, queries such as “PRMT5 inhibitors with IC50 < 10 nM” and “Show PRMT5 compounds below 10 nanomolar” are semantically equivalent and can be served from the same cached result. The system converts each query into a vector embedding, stores embeddings alongside responses in a vector database, and performs similarity search for subsequent queries to determine cache hits. This approach is effective for paraphrase handling, conversational queries, and reducing repeated downstream calls without requiring exact string matching.
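The lookup described above reduces to a nearest-neighbor search under cosine similarity followed by a threshold test. The sketch below is a minimal, dependency-free illustration: the three-dimensional vectors are hand-made stand-ins for real sentence embeddings, and the function names are assumptions rather than the system's actual API.

```python
import math
from typing import Optional, Sequence, Tuple

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(query_vec: Vector,
               cache: Sequence[Tuple[Vector, str]],
               threshold: float = 0.85) -> Optional[str]:
    """Return the cached response most similar to the query, or None on a miss."""
    best_score, best_response = -1.0, None
    for cached_vec, response in cache:
        score = cosine_similarity(query_vec, cached_vec)
        if score > best_score:
            best_score, best_response = score, response
    # A hit requires the best score to exceed the threshold.
    return best_response if best_score > threshold else None


# Toy cache: each entry pairs a stand-in embedding with a cached response.
toy_cache = [
    ([1.0, 0.0, 0.0], "cached PRMT5 response"),
    ([0.0, 1.0, 0.0], "cached EGFR response"),
]
hit = best_match([0.9, 0.1, 0.0], toy_cache)    # close to the first entry
miss = best_match([0.6, 0.6, 0.0], toy_cache)   # equally far from both entries
```

A linear scan like this mirrors the choice to compute similarity in application code; it is adequate for small caches but would need an index as the number of entries grows.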

However, response-level semantic caching is prone to false positives. For instance, a cached query such as “PRMT5 inhibitors with IC50 < 10 nM” may be incorrectly reused for a new query like “PRMT5 inhibitors with IC50 < 10 nM and CNS penetration”. Although embedding similarity may be high, the additional pharmacokinetic constraint materially changes the required result set. Threshold tuning can mitigate but not eliminate this risk, and because only final responses are cached, the system lacks awareness of which intermediate assumptions remain valid. These limitations motivate more granular caching strategies that operate at the level of tool executions or constraint-aware components rather than entire responses.
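One way to picture a constraint-aware check is a guard that refuses a cache hit when the new query carries a constraint the cached query lacks. The sketch below is an assumption-laden illustration, not part of the described system: the constraint vocabulary, the function names, and the similarity value of 0.93 used in the example are all hypothetical.

```python
from typing import FrozenSet

# Hypothetical vocabulary of phrases that change the required result set.
CONSTRAINT_TERMS = ("cns penetration", "bioavailability", "selectivity", "solubility")


def constraint_signature(query: str) -> FrozenSet[str]:
    """Set of known constraint phrases mentioned in the query."""
    text = " ".join(query.lower().split())
    return frozenset(term for term in CONSTRAINT_TERMS if term in text)


def safe_to_reuse(new_query: str, cached_query: str,
                  similarity: float, threshold: float = 0.85) -> bool:
    """Allow reuse only if similarity is high AND the constraint sets agree."""
    if similarity <= threshold:
        return False
    return constraint_signature(new_query) == constraint_signature(cached_query)


cached = "PRMT5 inhibitors with IC50 < 10 nM"
# 0.93 is an illustrative similarity score, not a measured value.
blocked = safe_to_reuse("PRMT5 inhibitors with IC50 < 10 nM and CNS penetration", cached, 0.93)
allowed = safe_to_reuse("Show PRMT5 inhibitors with IC50 < 10 nM", cached, 0.93)
```

A keyword guard of this kind only catches constraints it has been told about; it narrows the false-positive window rather than closing it.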

3. Cache Augmented Generation: From Response Caching to Tool Level Reuse

Cache Augmented Generation represents a progression from caching final answers toward caching intermediate computational artifacts. In our current implementation, we realize the first stage of this progression. The logical next stage, caching intermediate computational artifacts, is described in this section as a target architecture. While the term originally described persistence of model key-value caches for context reuse, we apply the CAG principle at the tool execution layer to address scalability constraints inherent in life sciences data federation.

Limitations of KV-Cache Based CAG for Federated Data

Traditional CAG implementations persist the model’s internal representation (KV-cache) after processing a bounded dataset, enabling subsequent queries to reuse this computational state without re-ingesting data. For example, loading a curated set of 5,000 PRMT5 inhibitors into model context and caching the resulting attention states allows rapid responses to multiple analytical queries against this fixed dataset.

However, this approach is infeasible for production life sciences workflows where:

- Datasets exceed context window limits (ChEMBL: 2.3M+ compounds; PubChem: 100M+ compounds)

- Data is federated across heterogeneous APIs (ChEMBL, BindingDB, PubChem, proprietary databases)

- Query scope varies dynamically (target specific vs. pan assay analysis)

- Data freshness requirements preclude static snapshots

Tool Level CAG via Semantic Caching

In our current implementation, Cache Augmented Generation is applied at the query-response layer. When a user query is processed, the system stores the LLM-formatted response alongside the tool identifier, tool arguments, and query embedding. Subsequent semantically similar queries retrieve this cached response directly, bypassing both the MCP tool call and the LLM formatting step. This provides measurable performance gains for repeated and paraphrased queries.

The logical next stage of this architecture, caching at the tool execution boundary, where raw API outputs are stored and reused independently of how questions are phrased, is described below as a target design. This distinction between the current response-level implementation and the proposed tool-level design is important for interpreting the performance results and limitations discussed in subsequent sections.

Illustrative Example: PRMT5 Inhibitor Analysis

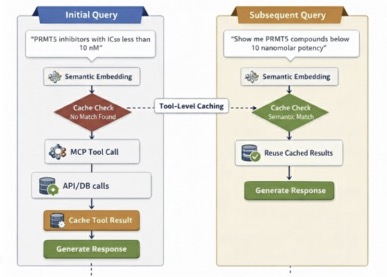

Initial Query: “PRMT5 inhibitors with IC50 less than 10 nM”

The system:

- Embeds the query semantically

- Finds no similar cached query

- Executes the MCP tool call against the ChEMBL API: get_targets(target='PRMT5'), then filters activities by threshold (ic50_max=10)

- Caches the query embedding, tool name and arguments, and the LLM-formatted response

Subsequent Query: a semantically similar rephrasing of the initial query

The system:

- Embeds the new query

- Detects semantic similarity to cached query above the 0.85 threshold

- Reuses cached LLM-formatted response without re-executing the MCP tool call or LLM formatting step

Key Distinction of CAG from Response-Level Caching: The current CAG implementation caches the LLM-formatted response at the MCP tool-call level, meaning the cache entry is tied to a specific tool invocation and its arguments. This enables reuse across differently phrased questions that map to the same tool call. True compositional reuse, where partial tool results serve multiple structurally different queries, is the intended next stage and is described above as future work.

Computational Advantage:

Response Level Semantic Cache (traditional approach):

What it caches: The final formatted answer to a query

Limitation: Only works if you ask essentially the same question again. For example:

- Query 1: “PRMT5 inhibitors with IC50 < 10 nM” → caches the answer

- Query 2: “What’s the bioavailability of PRMT5 inhibitors?” → Cache miss! Different question, can’t reuse

Even though both queries need the same underlying PRMT5 data from ChEMBL, the cache can’t help because the questions are different.

Tool Level CAG

What it caches (target design): The raw ChEMBL API response (the list of PRMT5 compounds with IC50 < 10 nM)

The current implementation achieves reuse for semantically equivalent or paraphrased versions of the same question. For example:

- ‘Show me PRMT5 inhibitors’ → fetches from ChEMBL, caches the LLM-formatted response

- ‘Show PRMT5 compounds’ → semantic cache hit, returns cached response directly

- Both questions receive the same answer without a second tool call because their query embeddings score above the 0.85 similarity threshold.

True compositional reuse, where a single cached dataset answers structurally different questions such as ‘What is the average IC50?’ and ‘Which compounds have good bioavailability?’, represents the intended future behavior of a tool-level caching architecture and is not present in the current implementation.

KV-Cache CAG (not feasible)

- What it would do: Load all PRMT5 data into the LLM’s context, cache the model’s internal attention states

- Why it fails: ChEMBL alone holds more than 2.3M compounds, and even the PRMT5 subset with its associated activity records is large. LLM context windows are limited to roughly 200K tokens, so millions of compound records cannot fit.

4. System Architecture and Implementation

4.1 Architectural Overview

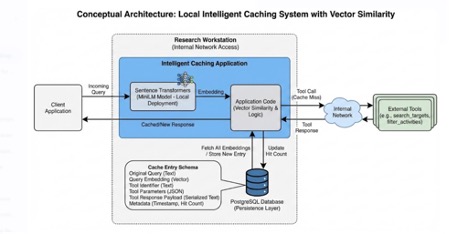

Our implementation delivers semantic caching at the query-response layer, storing LLM-formatted responses alongside tool identifiers, tool parameters, and query embeddings. This approach enables reuse of computational work across semantically equivalent queries without requiring repeated external tool calls.

The system persists query-response pairs, including tool parameters, query embeddings, and LLM-formatted responses, instead of caching model internal states, providing a pragmatic alternative to both response level caching and full key value cache persistence.

Technical Components:

- Persistence: PostgreSQL; vector similarity is computed in application code rather than via pgvector indexes

- Embeddings: Sentence Transformers using a MiniLM model deployed locally

- Infrastructure: Research workstation with internal network access

Cache Entry Schema: Each stored entry contains:

- Original query text

- Query embedding (vector representation)

- Tool identifier (e.g., search_targets, filter_activities)

- Tool parameters (JSON format)

- Tool response stored as a structured payload serialized to text.

- Metadata: timestamp, hit count
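The schema above maps naturally onto a small record type. The sketch below is one possible in-code representation; the class name, field names, and the `to_row` helper are assumptions for illustration and do not reflect the actual table definition.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List


@dataclass
class CacheEntry:
    """One cached query-response pair (field names are illustrative)."""
    query_text: str                 # original query text
    query_embedding: List[float]    # vector representation of the query
    tool_name: str                  # e.g. "search_targets"
    tool_args: Dict[str, Any]       # tool parameters
    response_text: str              # structured payload serialized to text
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    hit_count: int = 0

    def to_row(self) -> Dict[str, Any]:
        """Flatten to column values; tool_args becomes a canonical JSON string."""
        return {
            "query_text": self.query_text,
            "query_embedding": self.query_embedding,
            "tool_name": self.tool_name,
            "tool_args": json.dumps(self.tool_args, sort_keys=True),
            "response_text": self.response_text,
            "created_at": self.created_at.isoformat(),
            "hit_count": self.hit_count,
        }


entry = CacheEntry(
    query_text="PRMT5 inhibitors with IC50 less than 10 nM",
    query_embedding=[0.1, 0.2, 0.3],   # placeholder values, not a real embedding
    tool_name="filter_activities",
    tool_args={"target": "PRMT5", "ic50_max": 10},
    response_text="(LLM-formatted summary would go here)",
)
row = entry.to_row()
```

Serializing `tool_args` with sorted keys gives two entries with the same arguments an identical string form, which simplifies later comparison.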

4.2 Query Processing Pipeline

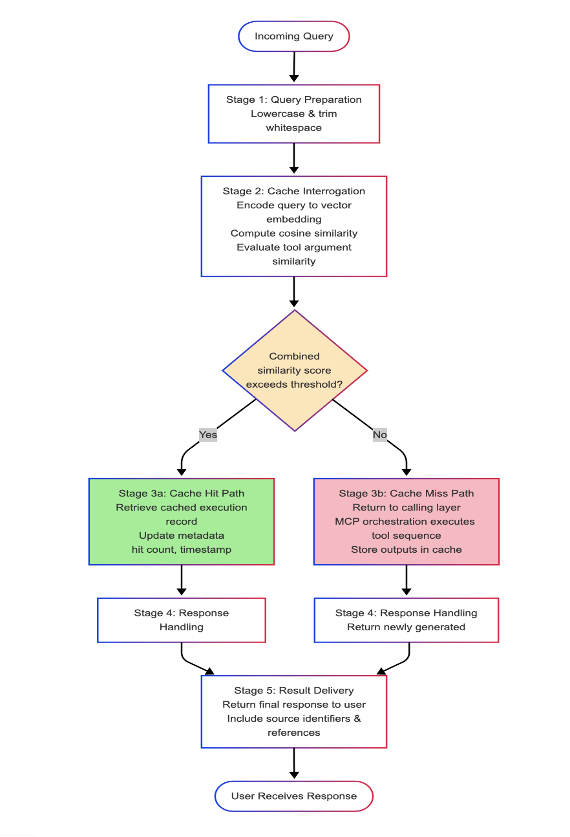

The system processes queries through a five-stage workflow designed to enable semantic reuse of prior tool executions while preserving deterministic behavior.

Step 1: Query Preparation: Incoming natural language queries are minimally normalized through lowercasing and whitespace trimming. No explicit semantic rewriting or canonicalization is performed. Queries are preserved in their original form and prepared for embedding based similarity evaluation.

Step 2: Cache Interrogation: The prepared query is encoded into a vector embedding using a locally deployed Sentence Transformers MiniLM model. The embedding is compared against stored query embeddings using cosine similarity. In parallel, tool argument similarity is evaluated to improve matching precision. A combined similarity score is computed and compared against a configurable threshold to determine cache eligibility.
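How the two signals in Step 2 are combined is not specified here, so the sketch below shows one plausible formulation only: a weighted average of embedding similarity and a Jaccard overlap of tool arguments. The 0.7/0.3 weighting, the Jaccard measure, and both function names are assumptions.

```python
import json
from typing import Any, Dict


def argument_similarity(a: Dict[str, Any], b: Dict[str, Any]) -> float:
    """Jaccard overlap of (key, value) pairs between two argument dicts."""
    items_a = {(k, json.dumps(v, sort_keys=True)) for k, v in a.items()}
    items_b = {(k, json.dumps(v, sort_keys=True)) for k, v in b.items()}
    if not items_a and not items_b:
        return 1.0  # two empty argument sets are treated as identical
    return len(items_a & items_b) / len(items_a | items_b)


def combined_score(embedding_sim: float, args_sim: float,
                   embedding_weight: float = 0.7) -> float:
    """Weighted average of the two signals (the weight is an assumed value)."""
    return embedding_weight * embedding_sim + (1.0 - embedding_weight) * args_sim


same_args = argument_similarity({"target": "PRMT5", "ic50_max": 10},
                                {"target": "PRMT5", "ic50_max": 10})
diff_args = argument_similarity({"target": "PRMT5", "ic50_max": 10},
                                {"target": "PRMT5", "ic50_max": 100})

# With an illustrative embedding similarity of 0.9, only matching arguments
# keep the combined score above the 0.85 threshold.
score_same = combined_score(0.9, same_args)
score_diff = combined_score(0.9, diff_args)
```

The point of the second signal is visible in the example: two queries that read alike but differ in potency threshold score well on embeddings alone, and the argument term pulls the combined score below the cutoff.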

Step 3: Cache Hit Path: When the combined similarity score exceeds the configured threshold, the system retrieves the corresponding persisted tool execution record. The cached response is returned directly without invoking external tools. Cache metadata, including hit count and last accessed timestamp, is updated to support monitoring and lifecycle management.

Cache Miss Path: When no suitable match is found, search_cache() returns None and _update_statistics(hit=False) records the miss. The query is then returned to the calling layer for MCP orchestration and execution of the required tool sequence. Following successful execution, store_cache() and store_query_response() persist the LLM-formatted response, query embedding, tool identifier, tool arguments, and original query text into cag_query_cache for future reuse.
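The hit and miss paths can be sketched end to end with an in-memory stand-in for the cag_query_cache table. The method names search_cache(), store_query_response(), and _update_statistics() come from the text above, but their signatures here are assumptions, as are the `QueryCache` class, the `answer` helper, the toy vocabulary embedding, and the fake pipeline.

```python
import math
from typing import Any, Callable, Dict, List, Optional, Sequence, Tuple

Vector = Sequence[float]


def _cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class QueryCache:
    """In-memory stand-in for the cag_query_cache table."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.85):
        self._embed = embed
        self._threshold = threshold
        self._entries: List[Dict[str, Any]] = []
        self.stats = {"hits": 0, "misses": 0}

    def _update_statistics(self, hit: bool) -> None:
        self.stats["hits" if hit else "misses"] += 1

    def search_cache(self, query: str) -> Optional[str]:
        """Return the cached response, or None when nothing is similar enough."""
        vec = self._embed(query.strip().lower())  # Step 1 normalization
        scored = [(_cosine(vec, e["embedding"]), e) for e in self._entries]
        score, entry = max(scored, key=lambda pair: pair[0], default=(0.0, None))
        if entry is None or score <= self._threshold:
            self._update_statistics(hit=False)
            return None
        entry["hit_count"] += 1
        self._update_statistics(hit=True)
        return entry["response"]

    def store_query_response(self, query: str, tool_name: str,
                             tool_args: Dict[str, Any], response: str) -> None:
        self._entries.append({
            "query": query,
            "embedding": list(self._embed(query.strip().lower())),
            "tool_name": tool_name, "tool_args": tool_args,
            "response": response, "hit_count": 0,
        })


def answer(query: str, cache: QueryCache,
           run_pipeline: Callable[[str], Tuple[str, Dict[str, Any], str]]) -> str:
    """Serve from cache when possible; otherwise run the pipeline and store."""
    cached = cache.search_cache(query)
    if cached is not None:
        return cached
    tool_name, tool_args, response = run_pipeline(query)
    cache.store_query_response(query, tool_name, tool_args, response)
    return response


# Toy embedding over a three-word vocabulary; a real deployment would call
# the locally deployed MiniLM model instead.
VOCAB = ("prmt5", "egfr", "inhibitors")


def toy_embed(text: str) -> List[float]:
    words = text.split()
    return [float(words.count(w)) for w in VOCAB]


pipeline_calls: List[str] = []


def fake_pipeline(query: str) -> Tuple[str, Dict[str, Any], str]:
    """Stands in for the full MCP tool execution and LLM formatting."""
    pipeline_calls.append(query)
    return "search_targets", {"target": query.split()[0].upper()}, "answer for: " + query


cache = QueryCache(toy_embed)
first = answer("PRMT5 inhibitors", cache, fake_pipeline)      # miss, stored
second = answer("  prmt5 inhibitors ", cache, fake_pipeline)  # hit after normalization
third = answer("EGFR inhibitors", cache, fake_pipeline)       # miss, different target
```

In this run the pipeline executes twice and the cache serves one request, which is the behavior the hit and miss paths are meant to guarantee.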

Step 4: Response Handling: The caching layer returns either the cached LLM-formatted response or a newly generated response from the full MCP and LLM pipeline. Any transformation of structured outputs into natural language responses is performed downstream and is outside the scope of the caching implementation.

Step 5: Result Delivery: Final responses are returned to the user. Where provided by upstream tools, responses may include source identifiers and references, enabling downstream validation and reproducibility.

5. Strategic Implementation Decisions

The architecture of this system is defined by three pivotal design choices aimed at balancing computational efficiency with analytical precision.

5.1 Calibration of the Semantic Threshold

To govern the retrieval process, we established a similarity threshold of 0.85. This value was selected following iterative testing of sample queries to ensure the system distinguishes between genuine paraphrases and superficially similar but biologically distinct prompts. For example, the system successfully aligns “PRMT5 inhibitors” with “compounds targeting PRMT5” and recognizes “IC50 < 10 nM” as equivalent to “below 10 nanomolar.” Conversely, a lower threshold risks false positives. During manual testing, the system correctly identified “EGFR inhibitors” as semantically distinct from PRMT5 queries, with cosine similarity scores well below the 0.85 threshold. Systematic calibration of exact per-pair similarity scores is identified as future evaluation work. While 0.85 serves as a conservative baseline for our current pilot, we view it as a functional starting point for high-precision research rather than an absolute mathematical optimum.

5.2 Transition from Raw Data to Curated Synthesis

A significant friction point in standard tool-use workflows is the delivery of exhaustive, raw JSON payloads. Our implementation prioritizes “Context Summarization,” where the Large Language Model (LLM) distills complex data arrays into curated executive summaries. Instead of presenting a researcher with a long list of unformatted entries, the system reports the total number of compounds retrieved (for example, 52 PRMT5 inhibitors) and highlights the top-tier examples with their corresponding IC50 values. This refinement transforms data retrieval into actionable insight.

5.3 Efficiency via Write-Through Caching

To optimize long-term performance, we use a write-through caching strategy. Under this model, every cache miss triggers the full Model Context Protocol (MCP) workflow, and the resulting response is then stored in PostgreSQL. Query embeddings are serialized as binary arrays, and similarity is computed in application code. While the first query pays the full execution cost plus a small storage overhead, subsequent semantically similar queries are served from the cache, effectively front-loading the “computational tax” to benefit the broader research lifecycle.
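Storing an embedding as a binary array amounts to packing its floats into bytes on write and unpacking them on read. The sketch below assumes little-endian 32-bit floats; the actual byte layout used by the system is not stated, and both function names are hypothetical.

```python
import struct
from typing import List, Sequence

_FLOAT_SIZE = 4  # bytes per 32-bit float (assumed storage precision)


def embedding_to_bytes(vec: Sequence[float]) -> bytes:
    """Pack a vector as little-endian float32 values for a binary column."""
    return struct.pack(f"<{len(vec)}f", *vec)


def bytes_to_embedding(blob: bytes) -> List[float]:
    """Inverse of embedding_to_bytes; rejects blobs of the wrong length."""
    if len(blob) % _FLOAT_SIZE != 0:
        raise ValueError("blob length is not a multiple of 4 bytes")
    count = len(blob) // _FLOAT_SIZE
    return list(struct.unpack(f"<{count}f", blob))


# These sample values are exactly representable in float32, so the
# round trip reproduces them without rounding error.
blob = embedding_to_bytes([0.5, -0.25, 1.0])
restored = bytes_to_embedding(blob)
```

Values that are not exactly representable in 32 bits come back slightly rounded, which is harmless for cosine similarity but worth remembering when comparing vectors for equality.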

6. Performance Benchmarks and Validation

Initial testing on a research workstation makes a promising case for the hybrid model. Our results are based on performance observed during manual testing. Cache misses, which require full MCP tool execution and LLM formatting, consistently exceeded 10 seconds end-to-end. Cache hits, which bypass both steps, took approximately 3.2 seconds. These figures are informal observations from pilot testing rather than statistically validated benchmarks. Systematic performance evaluation with controlled query sets and repeated measurements is identified as necessary future work.
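To see what these two observations imply for a mixed workload, the mean latency can be estimated as a hit-rate-weighted average. The sketch below uses the observed 3.2 seconds for hits and 10 seconds as a floor for misses; the hit rates passed in are hypothetical inputs, not measurements.

```python
def expected_latency(hit_rate: float,
                     hit_seconds: float = 3.2,
                     miss_seconds: float = 10.0) -> float:
    """Mean latency for a given cache hit rate.

    Because misses were observed to exceed 10 seconds, using 10.0 for
    miss_seconds makes the result a lower bound on the true mean.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_seconds + (1.0 - hit_rate) * miss_seconds


no_cache = expected_latency(0.0)    # every query runs the full pipeline
half_hits = expected_latency(0.5)   # hypothetical 50% hit rate
```

At a hypothetical 50% hit rate the estimate is about 6.6 seconds, so the benefit scales directly with how repetitive the query stream is.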

In terms of consistency, the system behaved reliably in testing. Identical queries return identical results, and paraphrased queries remain stable. In one test case, the system successfully matched “aspirin-related compounds” to “aspirin compounds,” suggesting that the semantic layer captures user intent without compromising data integrity.

7. Strategic Deployment Framework: CAG vs. RAG

A central finding of this initiative is that Cache-Augmented Generation (CAG) is not a replacement for Retrieval-Augmented Generation (RAG); rather, the two serve distinct operational profiles.

7.1 The Case for CAG

CAG is most effective when the underlying data is relatively static and the knowledge domain is bounded. It is the preferred approach for repetitive query patterns where consistency is paramount. For instance, a researcher focusing on PRMT5 inhibitors over several days may repeatedly ask about inhibitors, bioavailability, and binding modes. With CAG, the first query result is cached, and subsequent semantically similar queries bypass both the MCP tool call and LLM formatting step entirely.

7.2 The Case for RAG

Conversely, RAG is required when data changes frequently or when query patterns are unpredictable and the domain is unbounded. A computational biologist performing a broad scan across kinases, GPCRs, and ion channels would find little value in a cache, as each query targets a new, unrelated dataset. In these instances, CAG would offer no speed advantage and would risk presenting stale information.

7.3 The Hybrid Operational Model

Our system integrates both approaches through a tiered decision logic. Every query first undergoes a semantic cache check. If the similarity exceeds the 0.85 threshold, the system returns the cached response (CAG behavior).

If no sufficient similarity is found, the system executes fresh tool calls (RAG behavior). New executions are then stored in the cache via the write-through protocol. A notable challenge remains regarding cache invalidation. If external databases are updated, the cached results become stale. Our current mitigation is manual cache clearing, a temporary measure as we refine the automated lifecycle of the data.
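Manual clearing is the only invalidation mechanism in the current system. One common way such a lifecycle is automated is an age-based expiry, sketched below purely as an illustration of the idea: the `purge_stale` function, the `created_at` field, and the seven-day limit are assumptions, and nothing here describes implemented behavior.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List


def purge_stale(entries: List[Dict[str, Any]],
                now: datetime,
                max_age: timedelta) -> List[Dict[str, Any]]:
    """Keep only entries whose age does not exceed max_age."""
    return [e for e in entries if now - e["created_at"] <= max_age]


# Fixed timestamps keep the example deterministic.
now = datetime(2025, 1, 15, tzinfo=timezone.utc)
entries = [
    {"query": "PRMT5 inhibitors", "created_at": datetime(2025, 1, 14, tzinfo=timezone.utc)},
    {"query": "EGFR inhibitors", "created_at": datetime(2025, 1, 1, tzinfo=timezone.utc)},
]
fresh = purge_stale(entries, now, max_age=timedelta(days=7))
```

An age limit bounds how stale a response can be but does not detect upstream changes; tying expiry to database release versions would be a stricter alternative.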

8. Conclusion and Future Outlook

Motivated by the practical need to resolve the latency bottlenecks inherent in complex tool calls, our pilot indicates that query-response caching is an effective means of reducing latency in iterative research workflows. The system stores tool arguments and query embeddings alongside LLM-formatted responses, providing a partial audit trail of what parameters were used. For a complete audit trail suitable for regulated environments, one that includes the raw API responses the model was presented with, tool-level caching of raw outputs would be required, and is identified as a priority for future development.

We have learned that semantic matching is effective for paraphrase detection, even with simple embedding models. While CAG is not a universal solution, it provides a vital layer of persistent context reuse that complements RAG’s dynamic capabilities. As we continue to test these workflows on research workstations, we remain focused on evolving this hybrid model to support increasingly complex, iterative scientific discovery.