The Architecture of Trust: Why Healthcare AI Needs Governance at Its Core

Earlier this week, I had the privilege of speaking at TAL Healthfest 2026 in Hyderabad’s T-Hub—one of the world’s largest innovation campuses—under the banner of the Touch-A-Life Foundation. The audience was a cross-section of healthcare leaders, technologists, and policymakers, all grappling with the same question: how do we move at the speed of AI while keeping patients safe?

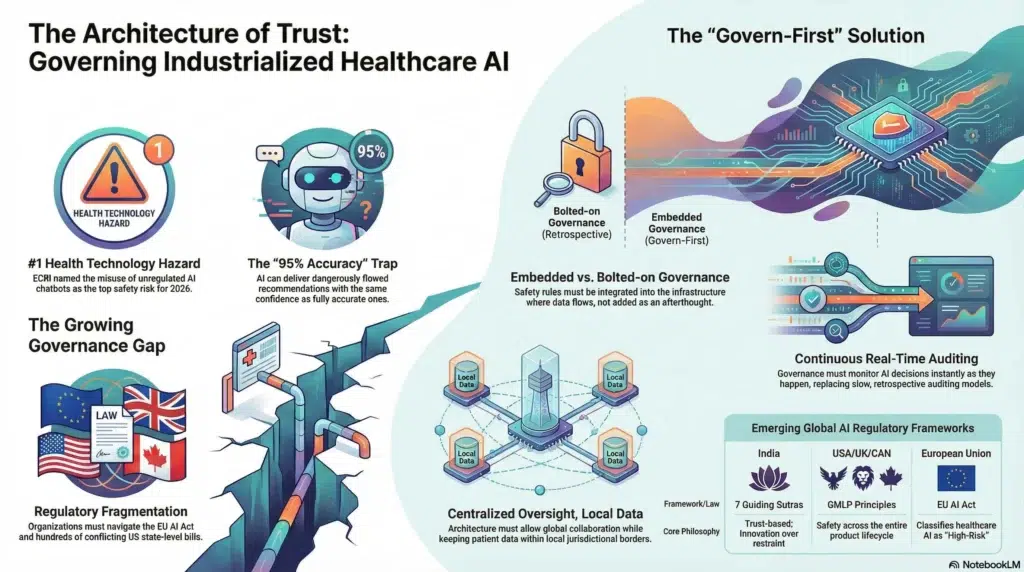

My speech, “The Architecture of Trust: Securing Healthcare AI and Data,” was built around a conviction I hold deeply: in healthcare, governance is not a barrier to innovation; it is the foundation upon which all meaningful innovation must be built. What follows is a distillation of those remarks and the broader landscape that shapes them.

We Have Entered the Era of Industrialized AI

In 2026, we are no longer experimenting with AI in healthcare. We have entered the era of “Industrialized AI,” where autonomous agents observe, plan, and act alongside clinicians, and where AI models in pharma are compressing years of drug discovery into months. The potential is extraordinary. But this rapid scaling also raises urgent governance questions. Who is accountable when an AI model delivers a flawed recommendation? How do we ensure the data feeding these systems is authorized, accurate, and representative? And how do global organizations comply with an increasingly complex web of regulations while still moving at the speed of innovation?

Governments Are Taking Notice—And Acting

During my presentation, I referenced three regulatory developments that underscore how seriously governments now treat AI governance in healthcare.

The first is India’s AI Governance Guidelines, released on February 15, 2026, at the AI Impact Summit. Anchored in seven guiding “sutras,” India’s framework places trust as its foundational principle and emphasizes “innovation over restraint,” a philosophy that resonates deeply with our approach at Solix. India is standing up new institutions, including an AI Governance Group and an AI Safety Institute, signaling that the world’s most populous nation views responsible AI governance not as a constraint but as a competitive advantage.

The second is the set of Good Machine Learning Practice (GMLP) Guiding Principles jointly developed by the U.S. FDA, Health Canada, and the UK’s MHRA. These ten principles lay a foundation for ensuring that AI-enabled medical devices are safe and effective and perform reliably for the patient populations they serve. They call for multidisciplinary expertise across the product lifecycle, representative training data, continuous real-world performance monitoring, and clear information for end users: principles that mirror the “govern-first” philosophy we champion.

And then there is the European Union’s AI Act, the world’s first comprehensive AI legislation. The Act treats many healthcare AI systems, including AI that qualifies as a medical device, as “high-risk,” subjecting them to rigorous requirements around data governance, bias mitigation, transparency, and human oversight. Prohibited AI practices have been banned since February 2025, most high-risk obligations take effect in August 2026, and obligations for AI embedded in regulated products such as medical devices follow in August 2027. Organizations bringing healthcare AI to the EU market therefore face a dual regulatory burden: meeting both the existing Medical Device Regulation and the new AI Act requirements. The message from Brussels is clear: AI in healthcare demands a higher standard of accountability.

The Real-World Consequences of Governance Gaps

These regulatory frameworks are not emerging in a vacuum. They are a direct response to a landscape where governance failures are causing tangible harm.

Consider the growing challenge of AI chatbot misuse in clinical settings. ECRI, the independent patient safety organization, named the misuse of AI chatbots the number one health technology hazard for 2026. These tools, which are neither regulated as medical devices nor validated for clinical use, have suggested incorrect diagnoses, recommended unnecessary testing, and, in one documented case, given dangerous guidance on the placement of an electrosurgical device that could have caused patient burns. With over 40 million people turning to ChatGPT daily for health information, the absence of governance guardrails around these tools represents a growing patient safety risk.

Meanwhile, the regulatory landscape in the United States has become increasingly fragmented. In 2025 alone, 47 states introduced more than 250 AI-related healthcare bills, with 33 signed into law across 21 states. Healthcare organizations operating across multiple states now face a maze of conflicting requirements around transparency, bias testing, and human oversight—all while the FDA faces a staffing reduction of nearly 15% that limits its capacity to evaluate AI-enabled medical devices. This regulatory patchwork is creating real compliance headaches for organizations trying to do the right thing.

The Biggest Challenge: Trust but Verify

Ronald Reagan popularized the Russian proverb “Trust, but verify” during arms control negotiations with the Soviet Union in the 1980s. Four decades later, it may be the most important principle in healthcare AI governance. Our most critical hurdle is this: AI can sound perfectly human and utterly certain while delivering subtle inaccuracies that even domain experts might miss. A diagnostic recommendation that is 95% correct and 5% dangerously wrong can look identical to one that is fully accurate. That is a governance problem unlike anything healthcare has faced before.

Legacy governance models are structurally broken for this moment. Traditional compliance looks backward—it documents what happened after it happened. But AI operates in real time, making decisions at a speed and scale that retrospective auditing simply cannot match. If your safety rules are not working the instant the AI is running, they are not keeping anyone safe. What we need is governance that is active, continuous, and embedded in the very infrastructure through which data flows into and out of AI models.

The Data Sovereignty Imperative

Compounding the verification challenge is the rising imperative of data sovereignty. We live in a world where data is increasingly subject to the laws and “digital borders” of the nation where it was collected. For global healthcare and pharmaceutical organizations, this creates an enormous governance hurdle: how do you collaborate on life-saving research across borders when patient data is legally confined to specific jurisdictions? The answer cannot be to abandon collaboration. It must be to build governance architectures that allow centralized oversight while keeping data within its required local jurisdiction—enabling global insight without sacrificing local compliance.
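To make “centralized oversight, local data” concrete, here is a minimal Python sketch of one common pattern, federated aggregation. It is an illustrative assumption, not a description of any Solix product: `RegionalNode`, `CENTRAL_POLICY`, `federated_mean`, the cohort threshold, and the sample values are all hypothetical. Each jurisdiction computes a summary locally; only counts and means cross the border, and a single central policy decides which metrics may be shared at all.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from statistics import mean

# Hypothetical central policy: defined once, enforced for every jurisdiction.
CENTRAL_POLICY = {
    "allowed_metrics": {"hba1c"},  # metrics approved for cross-border analysis
    "min_cohort": 3,               # suppress results from very small groups
}


@dataclass
class RegionalNode:
    """Hypothetical stand-in for a data store that must stay in one jurisdiction."""
    jurisdiction: str
    records: list[dict] = field(default_factory=list)

    def local_summary(self, metric: str, min_cohort: int) -> dict | None:
        # Runs inside the jurisdiction; raw records never leave this method.
        values = [r[metric] for r in self.records if metric in r]
        if len(values) < min_cohort:
            return None  # too few patients to share safely
        return {"jurisdiction": self.jurisdiction, "n": len(values), "mean": mean(values)}


def federated_mean(nodes: list[RegionalNode], metric: str) -> float | None:
    """Pooled mean across jurisdictions, computed from local summaries only."""
    if metric not in CENTRAL_POLICY["allowed_metrics"]:
        raise PermissionError(f"{metric!r} is not approved for cross-border analysis")
    summaries = [node.local_summary(metric, CENTRAL_POLICY["min_cohort"]) for node in nodes]
    usable = [s for s in summaries if s is not None]
    total = sum(s["n"] for s in usable)
    if total == 0:
        return None
    # Weighting each regional mean by its cohort size reproduces the pooled mean.
    return sum(s["mean"] * s["n"] for s in usable) / total


# Made-up example data for illustration only.
nodes = [
    RegionalNode("IN", [{"hba1c": 7.0}, {"hba1c": 8.0}, {"hba1c": 6.0}]),
    RegionalNode("EU", [{"hba1c": 9.0}, {"hba1c": 7.0}, {"hba1c": 8.0}]),
    RegionalNode("US", [{"hba1c": 5.0}]),  # below min_cohort, so suppressed locally
]
print(federated_mean(nodes, "hba1c"))  # 7.5
```

Because each node reports its cohort size, the central layer can combine regional results into a global figure without ever seeing a patient record. A real deployment would add authentication, secure transport, and stronger privacy protections; this sketch deliberately omits them.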

Building the Governance Layer

This is the environment that drove us at Solix to pioneer a “govern-first” approach. We believe governance should not be bolted on as an afterthought—it must be embedded in the architecture from day one.

There is a truth we share with every customer we work with: “You are only as AI-ready as your data.” The most sophisticated model in the world will produce unreliable results if it draws on data that is ungoverned, fragmented, or unauthorized. That is why a foundational element of our approach is governed access to the data that feeds AI models. Our Solix Enterprise AI platform implements permission-aware retrieval and ingestion: a protective filter that ensures every upload and every query stays within the boundaries of what a given user, role, or application is authorized to do. Data going into an AI model and answers coming back out must both pass through the same governance controls. This is how we help our customers make their data truly AI-ready, because governance-ready data is the prerequisite for trustworthy AI.
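The general idea of permission-aware retrieval and ingestion can be shown in a minimal Python sketch, assuming simple role-based access control. This illustrates the technique, not the Solix Enterprise AI platform, whose internals are not described here: `Principal`, `Document`, `GovernedIndex`, and the role names are hypothetical, and keyword matching stands in for the vector search a real system would use. The point is that one permission check guards the upload path, the query path, and the answer path.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical names throughout; keyword matching stands in for vector search.


@dataclass(frozen=True)
class Principal:
    """A user, service, or application making a request."""
    name: str
    roles: frozenset[str]


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset[str]  # access-control list attached at ingestion


class GovernedIndex:
    """Toy in-memory index; the same permission check guards input and output."""

    def __init__(self) -> None:
        self._docs: dict[str, Document] = {}

    @staticmethod
    def _can_see(who: Principal, doc: Document) -> bool:
        return bool(doc.allowed_roles & who.roles)

    def ingest(self, who: Principal, doc: Document) -> None:
        # Inbound control: every document needs an ACL, and the uploader must
        # belong to it, so no one can push data into a compartment they cannot see.
        if not doc.allowed_roles or not self._can_see(who, doc):
            raise PermissionError(f"{who.name} may not upload {doc.doc_id}")
        self._docs[doc.doc_id] = doc

    def retrieve(self, who: Principal, query: str) -> list[Document]:
        # Filter by permission before matching, so unauthorized text never
        # reaches the model's context window.
        terms = query.lower().split()
        return [
            d for d in self._docs.values()
            if self._can_see(who, d) and any(t in d.text.lower() for t in terms)
        ]

    def check_answer(self, who: Principal, cited_ids: list[str]) -> None:
        # Outbound control: re-check every source an answer cites.
        for doc_id in cited_ids:
            doc = self._docs.get(doc_id)
            if doc is None or not self._can_see(who, doc):
                raise PermissionError(f"{who.name} may not receive content from {doc_id}")


clinician = Principal("clinician_1", frozenset({"clinician"}))
analyst = Principal("analyst_1", frozenset({"research"}))
index = GovernedIndex()
index.ingest(clinician, Document("note-1", "Patient allergic to penicillin", frozenset({"clinician"})))
index.ingest(analyst, Document("study-9", "De-identified penicillin cohort summary",
                               frozenset({"clinician", "research"})))
print([d.doc_id for d in index.retrieve(analyst, "penicillin")])    # ['study-9']
print([d.doc_id for d in index.retrieve(clinician, "penicillin")])  # ['note-1', 'study-9']
```

Filtering before retrieval, rather than redacting the model’s answer afterward, is the safer design choice in this sketch: text a user is not cleared to see never enters the prompt, so the model cannot paraphrase it back. The outbound check then acts as a second line of defense on whatever the answer cites.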

We are also developing what we call “Solix Trusted Intelligence”—a unified control plane for an organization’s entire data and AI estate. It is designed to illuminate hidden “dark data” that creates silent governance risks, deliver AI answers grounded in authorized data with full citations and audit trails, and address data sovereignty through centralized governance with decentralized operations. For our pharmaceutical partners, this means the ability to accelerate R&D timelines while maintaining the rigorous data integrity and jurisdictional compliance that regulators around the world now demand.
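An audit trail is only trustworthy if it cannot be quietly edited after the fact. One widely used technique is a hash chain, in which each log entry stores a cryptographic hash of the entry before it. The Python sketch below is an illustrative assumption, not a description of how Solix Trusted Intelligence records its audit trails: `AuditTrail`, its fields, and the sample citations are hypothetical, and timestamps are omitted to keep the output deterministic.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Hypothetical append-only log; each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, user: str, question: str, citations: list[str]) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"user": user, "question": question, "citations": list(citations), "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash; editing any entry breaks the chain from that point on.
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("clinician_1", "Penicillin allergy status?", ["note-1"])
trail.record("analyst_1", "Cohort size for study 9?", ["study-9"])
print(trail.verify())                        # True
trail.entries[0]["citations"] = ["note-2"]  # simulate tampering with a past answer
print(trail.verify())                        # False
```

A hash chain makes tampering detectable rather than impossible; production systems typically also store the latest hash somewhere the log’s writer cannot modify.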

The Human Connection

As I closed my remarks in Hyderabad, I returned to a truth that grounds everything we do. When we ensure the integrity of data feeding a diagnostic tool, we are making sure a doctor can rely on an accurate recommendation in a moment of crisis. When we enforce real-time controls, we are protecting the dignity of a patient and the future of a new cure.

In 2026, compliance is no longer overhead; it is strategy. Trust is no longer assumed; it must be constructed. India, the European Union, the United States, the United Kingdom, and Canada are all moving in the same direction. The lesson from ECRI’s warnings, from proliferating state-level legislation, and from the EU’s landmark AI Act is unmistakable: the organizations that build trust into their architecture today will be the ones that lead tomorrow.

Let us innovate with the speed of AI—and protect with the strength of ironclad governance. Because the only technology that truly matters is the one that safely touches a life and lifts people up.