Zero Data Copy in an AI warehouse is an architectural approach that allows AI models, analytics, and applications to access and process data directly where it resides, across clouds, regions, and systems, without physically moving, duplicating, or replicating that data into the warehouse’s own storage layer. It relies on policy-aware, federated access, strong data governance, and metadata-driven control to minimize duplication, reduce risk and cost, and help keep AI workloads compliant, performant, and trustworthy.

What Is Zero Data Copy in an AI Warehouse?

Zero Data Copy in an AI warehouse describes a govern-first, federated data access model where the warehouse functions as an AI-native control and semantics layer over distributed data estates rather than as yet another place where data is copied and stored. Instead of centralizing data through traditional ETL or bulk replication, the AI warehouse uses metadata, semantic enrichment, and policy-driven connectors to query, join, and analyze data in place, whether that data lives in data lakes, operational systems, vector databases, or third-party platforms.

In this model, data remains within its original systems of record, but is exposed to AI and analytics workloads through secure, governed mechanisms such as federated queries, virtualized tables, and external objects that enforce access controls and data sovereignty requirements at the source. An AI warehouse that supports Zero Data Copy treats metadata as a first-class asset, using unified catalogs, lineage, and classification to make distributed data AI-ready without adding redundant copies that increase risk, cost, and complexity.
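
As a minimal sketch of querying data in place, the example below uses Python's built-in sqlite3 module, with two separate SQLite files standing in for systems of record. The file names, table names, and the customer_revenue view are hypothetical, and a real AI warehouse would use its own connectors rather than SQLite's ATTACH, so this illustrates the pattern only, not any particular product.

```python
import os
import sqlite3
import tempfile


def make_source(path, ddl, insert_sql, rows):
    """Create a stand-in 'system of record' as its own SQLite file."""
    con = sqlite3.connect(path)
    con.execute(ddl)
    con.executemany(insert_sql, rows)
    con.commit()
    con.close()


workdir = tempfile.mkdtemp()
crm_path = os.path.join(workdir, "crm.db")
billing_path = os.path.join(workdir, "billing.db")

make_source(crm_path,
            "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)",
            "INSERT INTO customers VALUES (?, ?)",
            [(1, "Acme"), (2, "Globex")])
make_source(billing_path,
            "CREATE TABLE invoices (customer_id INTEGER, amount REAL)",
            "INSERT INTO invoices VALUES (?, ?)",
            [(1, 120.0), (1, 80.0), (2, 50.0)])

# The "warehouse" connection stores no tables of its own; it only attaches sources.
warehouse = sqlite3.connect(":memory:")
warehouse.execute("ATTACH DATABASE ? AS crm", (crm_path,))
warehouse.execute("ATTACH DATABASE ? AS billing", (billing_path,))

# A TEMP view plays the role of a virtualized table: a stored query, not stored rows.
warehouse.execute("""
    CREATE TEMP VIEW customer_revenue AS
    SELECT c.name AS name, SUM(i.amount) AS revenue
    FROM crm.customers AS c
    JOIN billing.invoices AS i ON i.customer_id = c.id
    GROUP BY c.name
""")

revenue = dict(warehouse.execute("SELECT name, revenue FROM customer_revenue"))

# Confirm that the warehouse's own schema holds no physical tables.
local_tables = warehouse.execute(
    "SELECT COUNT(*) FROM main.sqlite_master WHERE type = 'table'"
).fetchone()[0]
```

The join runs across both source files at query time, and the warehouse's main schema stays empty, which is the property the Zero Data Copy model is after.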

What Is “Zero Data Copy” in Practice?

Zero Data Copy is often associated with “zero ETL” and “warehouse-native” architectures, where downstream tools and AI applications operate directly on a single, governed source of truth instead of creating additional data silos. In an AI warehouse context, Zero Data Copy takes this concept further by aligning AI-specific requirements, such as model training, retrieval-augmented generation (RAG), vector search, and real-time inference, with federated data access patterns.

A Zero Data Copy AI warehouse typically provides:

- Federated, policy-aware data access to multiple data sources (transactional systems, data lakes, files, unstructured content, and external SaaS platforms) without physically ingesting those datasets into the warehouse’s internal storage.

- Virtualization and semantic modeling, where logical views and knowledge graphs sit on top of physical data, allowing AI models to reason over unified context even though the data physically resides in different locations.

- Unified metadata and governance, which capture lineage, classification, sensitivity, and sovereignty attributes for all connected data assets, ensuring that AI workloads always respect regulatory and security policies at query time.
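
One way to picture policy enforcement at query time is a read path that consults catalog metadata before returning each row. The sketch below is an assumption-laden illustration: the Policy class, the role names, and the masking rule are all hypothetical, and the sample row is made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical catalog entry: which roles may see a column unmasked."""
    column: str
    unmasked_roles: frozenset


def mask(value):
    """Redact everything except the last two characters."""
    text = str(value)
    return "*" * max(len(text) - 2, 0) + text[-2:]


def governed_read(rows, policies, role):
    """Yield rows from a source, masking protected columns at query time."""
    protected = {p.column: p.unmasked_roles for p in policies}
    for row in rows:
        yield {
            col: mask(val) if col in protected and role not in protected[col] else val
            for col, val in row.items()
        }


# Rows as a connector might return them from the source system (made-up data).
source_rows = [{"id": 1, "email": "anna@example.com", "country": "DE"}]
policies = [Policy("email", frozenset({"privacy_officer"}))]

analyst_view = list(governed_read(source_rows, policies, role="analyst"))
officer_view = list(governed_read(source_rows, policies, role="privacy_officer"))
```

Because masking happens as rows are yielded, no unmasked extract is written anywhere for the analyst role; the source rows themselves are left untouched.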

In operational terms, an AI warehouse with Zero Data Copy can support use cases like enterprise search, generative AI assistants, AI-driven analytics, and agentic automation without triggering a proliferation of unmanaged data copies across clouds and tools. This makes the architecture especially attractive for highly regulated, data-intensive industries such as banking, healthcare, public sector, and life sciences, where data movement directly translates to compliance exposure and infrastructure overhead.

Why Is Zero Data Copy Important?

Zero Data Copy is important because it reshapes how enterprises architect AI platforms around governance, cost, agility, and trust, particularly as data volumes, modalities, and regulatory expectations surge. By eliminating unnecessary duplication and relying on strong metadata and policy controls, organizations can scale AI initiatives faster while maintaining compliance and operational control across complex, multi-cloud data estates.

Key Benefits of Zero Data Copy in an AI Warehouse

Reduced Data Movement and Storage Costs

- Avoids the need to replicate large datasets into each AI tool, warehouse, and analytics platform, significantly reducing cloud storage and data transfer spending.

- Minimizes overhead associated with ETL pipelines, sync jobs, and backup environments for duplicate copies of the same data.

Stronger Data Governance and Compliance

- Keeps sensitive data within its originating systems and jurisdictions, helping enterprises meet data sovereignty and sector-specific rules (e.g., GDPR, HIPAA, and regional regulations).

- Centralizes governance policies at the data layer so that AI workloads automatically inherit access controls, masking, and audit trails from the underlying platforms.

Improved Security and Reduced Risk

- Fewer physical copies mean a smaller attack surface, fewer systems to harden, and fewer uncontrolled exports of sensitive information.

- Real-time, policy-aware access ensures that data exposure is time-bound, contextual, and traceable by design.

Real-Time and Fresher AI Insights

- Bypasses batch synchronization delays so AI models and analytics query current data in operational systems, logs, and event streams rather than a replica that may be stale.

- Enhances the accuracy and timeliness of AI-driven decisions, predictions, and recommendations because the lag between data creation and AI consumption shrinks to the latency of the federated query itself.

Architectural Simplicity and Agility

- Reduces the complexity of building and maintaining point-to-point pipelines, transformations, and reconciliation processes across multiple tools.

- Makes it easier to plug in new AI services, agents, and applications that can immediately work with governed, in-place data via federated interfaces.

Optimized for AI-Driven Workloads

- Supports AI-native features like vector search, semantic enrichment, and RAG while still honoring security and compliance controls defined at the data layer.

- Aligns with the shift toward AI-native data platforms that treat metadata and semantics as core components of the architecture, not afterthoughts.

Challenges and Best Practices for Businesses

Putting Zero Data Copy into practice within an AI warehouse means working within real-world constraints. This section outlines the main challenges and then offers actionable recommendations for addressing them.

Key Challenges with Zero Data Copy in AI Warehouses

Complex, Fragmented Data Estates

- Many enterprises operate across multiple clouds, regions, legacy systems, and line-of-business applications, making it difficult to standardize federated access, semantics, and governance in a uniform way.

- Inconsistent schemas, incomplete metadata, and siloed ownership models can limit the ability of AI workloads to reliably query data in place at scale.

Performance and Cost Management for Federated Queries

- Running complex, cross-source queries in real time can introduce latency and unpredictable compute costs if not carefully designed and optimized.

- AI workloads such as training and RAG retrieval may require careful tiering and caching strategies to balance Zero Data Copy principles with performance SLAs.

Governance, Policy, and Access Control Complexity

- Centralizing governance while supporting decentralized operations and regional sovereignty requires robust policy modeling, role-based access control (RBAC), and continuous auditing across systems.

- Misaligned or inconsistent policies across source systems may undermine the promise of a single, govern-first control plane for AI workloads.

Metadata Quality and Coverage Gaps

- Zero Data Copy relies heavily on accurate, comprehensive metadata about data location, lineage, sensitivity, and semantics to make federated access safe and effective.

- Legacy environments often lack standardized catalogs, automated discovery, or classification, creating blind spots that can limit AI readiness and compliance.

Organizational Readiness and Skills

- Shifting from copy-based pipelines to a govern-first, federated AI warehouse requires new skills across data engineering, governance, security, and AI operations.

- Business units may be accustomed to owning local data copies, reports, and extracts, and may need change management to adopt centralized controls.

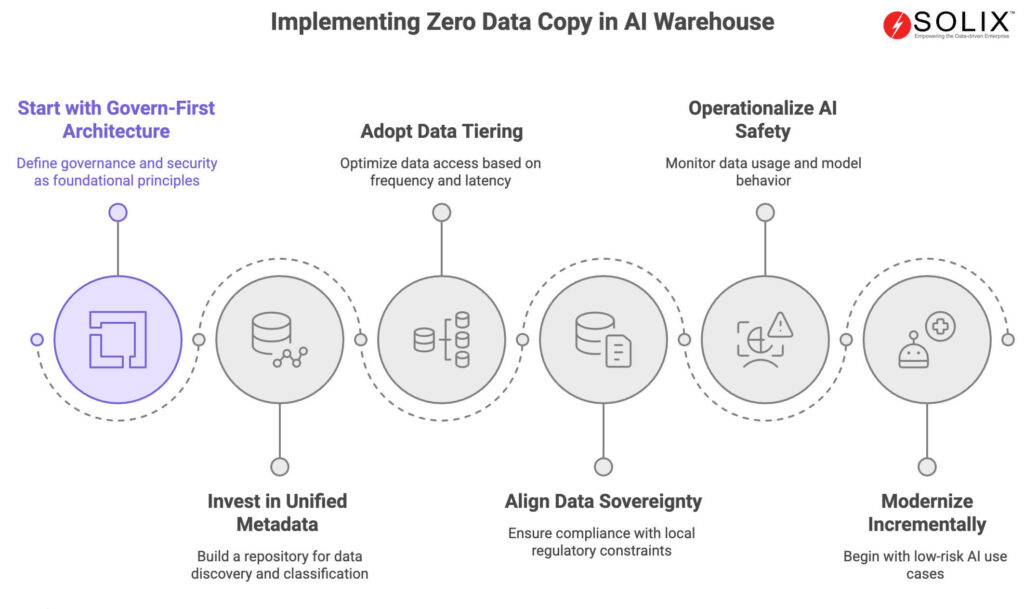

Best Practices for Adopting Zero Data Copy

Start with a Govern-First Architecture

- Define governance, security, and compliance as foundational design principles, not as add-ons after the AI warehouse is deployed.

- Implement centralized policy management, unified auditing, and consistent RBAC across all federated data sources before scaling sensitive AI use cases.

Invest in Unified Metadata and Classification

- Build a unified metadata repository that automatically discovers, tags, and classifies structured and unstructured data across the enterprise.

- Enrich metadata with business taxonomies, ontologies, and knowledge graphs so AI models and agents can interpret context and meaning without additional copies.
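
Automated tagging can be approximated, at its simplest, by pattern matching over small column samples. The following sketch assumes hypothetical tag names, regular expressions, and an 80% match threshold; production classifiers typically combine many more signals, so this is only a starting point.

```python
import re

# Hypothetical tag names and deliberately simple patterns.
PATTERNS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$"),
    "us_ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
}


def classify_column(samples, threshold=0.8):
    """Return the first tag matching at least `threshold` of the non-empty samples."""
    values = [s for s in samples if s]
    if not values:
        return "unclassified"
    for tag, pattern in PATTERNS.items():
        hits = sum(1 for v in values if pattern.match(v))
        if hits / len(values) >= threshold:
            return tag
    return "unclassified"


def build_catalog(tables):
    """Map 'table.column' to a tag, reading only the samples provided per column."""
    catalog = {}
    for table_name, columns in tables.items():
        for column_name, samples in columns.items():
            catalog[f"{table_name}.{column_name}"] = classify_column(samples)
    return catalog


# Made-up samples, as a discovery job might pull from a source system.
catalog = build_catalog({
    "customers": {
        "contact": ["a@x.io", "b@y.org", ""],
        "tax_id": ["123-45-6789", "987-65-4321"],
        "city": ["Oslo", "Lima"],
    }
})
```

The resulting catalog holds only tags, not the sampled values, so the metadata layer can drive masking and routing decisions without becoming another copy of the data.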

Adopt Data Tiering and Access Optimization

- Use data tiering (hot, warm, cold) and workload-aware routing so that frequently accessed, latency-sensitive data is optimized for AI workloads without violating Zero Data Copy principles.

- Combine federated access with intelligent caching, sampling, and pre-computed aggregates for heavy AI analytics while keeping authoritative records in place.
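
The caching idea above can be sketched as a short-lived, read-through cache in front of a federated fetch. The TTLCache class, the fetch_from_source function, and the 30-second TTL are hypothetical, and whether a cache of this kind is acceptable under a given Zero Data Copy policy is a governance decision rather than something the code settles.

```python
import time


class TTLCache:
    """Short-lived read-through cache in front of a federated fetch function."""

    def __init__(self, fetch, ttl_seconds, clock=time.monotonic):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: no round trip to the source
        value = self._fetch(key)  # expired or missing: go back to the source
        self._entries[key] = (now + self._ttl, value)
        return value


# A fake clock and a fake source keep the example deterministic.
source_calls = []
fake_now = [0.0]


def fetch_from_source(key):
    source_calls.append(key)
    return f"rows for {key}"


cache = TTLCache(fetch_from_source, ttl_seconds=30, clock=lambda: fake_now[0])
first = cache.get("orders")
second = cache.get("orders")  # served from the cache
fake_now[0] = 31.0
third = cache.get("orders")   # entry expired, so the source is queried again
```

Entries expire on their own, so the authoritative record stays in the source system and the cache never becomes a long-lived replica.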

Align Data Sovereignty and Federated Controls

- Implement federated governance that respects local regulatory constraints while enabling global insight through aggregated or anonymized outputs.

- Ensure regional policies, masking rules, and residency constraints are enforced programmatically at query time for all AI workloads.
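
Global insight from aggregated outputs can be illustrated with per-region aggregation plus small-group suppression. In this sketch the function names, the minimum group size of 3, and the sample amounts are all assumptions, and the dictionary of regions stands in for separate regional systems running in one process.

```python
def local_aggregate(rows, min_group_size):
    """Meant to run inside a region: return a count and sum, or None if the group is too small."""
    if len(rows) < min_group_size:
        return None  # suppress small groups that could identify individuals
    return {"count": len(rows), "total": sum(r["amount"] for r in rows)}


def global_average(regional_rows, min_group_size=3):
    """Combine per-region aggregates; only counts and sums feed the global result."""
    count = 0
    total = 0.0
    suppressed = []
    for region, rows in regional_rows.items():
        agg = local_aggregate(rows, min_group_size)
        if agg is None:
            suppressed.append(region)
            continue
        count += agg["count"]
        total += agg["total"]
    average = total / count if count else None
    return {"average": average, "regions_suppressed": suppressed}


# Made-up regional data; in practice each list would stay in its own jurisdiction.
result = global_average({
    "eu": [{"amount": 10.0}, {"amount": 20.0}, {"amount": 30.0}],
    "us": [{"amount": 40.0}, {"amount": 50.0}, {"amount": 60.0}],
    "apac": [{"amount": 100.0}],
})
```

Only the count and total computed by local_aggregate are used outside the region loop, which mirrors the idea of enforcing residency constraints while still producing a cross-region figure.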

Operationalize AI Safety and Observability

- Monitor data usage, model behavior, and lineage across all AI workloads accessing federated data, including training, evaluation, and inference.

- Establish feedback loops and human-in-the-loop validation for high-risk decisions so that AI operations remain transparent, explainable, and auditable.

Modernize Incrementally with Reference Use Cases

- Begin with high-impact, low-risk AI use cases such as governed enterprise search, knowledge assistants, or analytics over archived data before expanding to mission-critical processes.

- Use these early projects to validate governance models, performance assumptions, and organizational roles before scaling Zero Data Copy patterns across the enterprise.

How Solix Helps with Zero Data Copy in an AI Warehouse

Solix Technologies delivers an AI-native warehouse platform that embeds Zero Data Copy as a core design principle, enabling enterprises to unify AI, governance, and analytics without proliferating data copies across tools and clouds. The Solix AI Warehouse is positioned as a fourth-generation data platform that unifies structured and unstructured data under a robust information architecture, with metadata, governance, and AI semantics at its core.

Govern-First, AI-Native Warehouse

Solix AI Warehouse is built as an AI-native data platform that treats governance, security, and compliance as the foundation for every AI workload, from training and RAG to agentic automation. With embedded lineage, auditing, and policy-driven controls, Solix ensures that all federated data access, including Zero Data Copy scenarios, adheres to enterprise and regulatory requirements across multi-cloud environments.

Zero Data Copy as a Core Principle

Solix explicitly defines Zero Data Copy within its AI Warehouse, enabling direct, policy-aware access to data where it resides, instead of enforcing full replication into the warehouse. This allows enterprises to minimize duplication, mitigate compliance risk, and control costs while still unlocking AI-driven value from existing data investments.

Unified Metadata and AI Semantics

Solix positions metadata as “the core” of fourth-generation AI data platforms, providing a unified metadata service that spans all connected data estates, vector stores, and AI agents. This unified metadata repository automatically discovers, tags, and classifies both structured and unstructured assets, enabling intelligent data products that are AI-ready without the need for additional copies.

Enterprise AI and AI Governance

Beyond the AI Warehouse itself, Solix provides Enterprise AI and AI Governance capabilities that extend Zero Data Copy principles across the AI lifecycle. By integrating governance with generative AI, vector search, and multi-modal data management, Solix helps organizations build AI solutions that are explainable, compliant, and production-ready.

Why Solix Is a Leader in Zero Data Copy for AI Warehouses

Solix Technologies stands out as a leader in Zero Data Copy for AI warehouses because it combines a fourth-generation, AI-native architecture with deep experience in data archiving, cloud data management, and governance across regulated industries. The platform unifies archiving, data lakes, content services, and Enterprise AI into a common data platform that is explicitly designed to make enterprise data AI-ready while minimizing movement and duplication.

Frequently Asked Questions (FAQs) about Zero Data Copy in an AI Warehouse

What does Zero Data Copy mean in an AI warehouse?

Zero Data Copy in an AI warehouse means AI and analytics workloads access and process data directly where it resides, without physically moving or replicating it into the warehouse’s own storage, using federated, policy-aware controls.

How does Zero Data Copy reduce AI project risk?

Zero Data Copy reduces AI project risk by minimizing uncontrolled data duplication, keeping sensitive information in governed source systems, and ensuring that security, access controls, and audit trails are consistently enforced at the data layer.

Why is Zero Data Copy important for data sovereignty?

It is important for data sovereignty because it allows organizations to comply with regional residency and privacy regulations by leaving data in its original jurisdiction while still delivering global AI insights through federated queries and aggregated outputs.

What are the main benefits of Zero Data Copy in AI analytics?

Key benefits include lower storage and transfer costs, fewer data silos, stronger governance and compliance, real-time access to fresher data, and simpler architectures for deploying AI-driven analytics at scale.

How does Solix AI Warehouse support Zero Data Copy?

Solix AI Warehouse supports Zero Data Copy through federated, policy-aware access to distributed data sources, unified metadata and AI semantics, data tiering across multi-cloud environments, and a govern-first architecture tailored for AI workloads.

Can Zero Data Copy work with unstructured and multi-modal data?

Yes, Zero Data Copy can extend to unstructured and multi-modal data when the AI warehouse provides unified metadata, semantic enrichment, and vector search capabilities that allow AI models to work with documents, images, video, and sensor data without unnecessary replication.

What challenges do enterprises face when implementing Zero Data Copy?

Typical challenges include fragmented data estates, inconsistent governance policies, performance tuning for federated queries, gaps in metadata coverage, and the need for new skills and processes aligned with govern-first AI architectures.

How do Zero Data Copy and AI governance relate?

Zero Data Copy and AI governance are closely linked because both rely on centralized policies, metadata, lineage, and auditing to ensure that AI models only access data in compliant, transparent, and explainable ways across the entire AI lifecycle.